A brain signal is tiny. Messy. Electrical noise mixed with meaning. And yet, scientists can sometimes pull enough pattern out of that noise to do something wild, like move a cursor, control a robotic arm, or generate speech from neural activity. That’s the promise behind a brain-computer interface (BCI).

But the promise comes with a reality check. Brains aren’t keyboards. The signals drift. People get tired. Sensors pick up muscle movement and blinking. And the brain itself changes day to day. So the science is less “mind reading” and more “careful decoding with a lot of engineering.”

This guide explains how BCIs work, how neural mapping fits in, what’s actually possible today, and what questions matter as the field keeps accelerating.

A brain-computer interface is a system that measures brain activity, interprets patterns, and turns them into commands for a device. That device could be a computer, a wheelchair, a robotic limb, or software that helps communication.

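Most BCIs follow the same pipeline: measure brain activity, interpret the patterns, turn them into device commands, and feed the result back to the user.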

That feedback loop is crucial. Users learn the system, and the system learns the user. It’s training on both sides, not just the computer side.
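The pipeline can be sketched in a few lines of Python. This is a toy illustration under loud assumptions, not how any real BCI is implemented: the two-channel "readings" are simulated random numbers, the intents `left` and `right` are hypothetical, and the decoder is a plain nearest-centroid classifier chosen only because it is easy to read.

```python
import math
import random

# Hypothetical "true" signal centers for two imagined intents (an assumption).
CENTERS = {"left": (1.0, -1.0), "right": (-1.0, 1.0)}

def measure(intent, rng, noise=0.5):
    """Simulate one noisy two-channel reading for a given intent."""
    cx, cy = CENTERS[intent]
    return (cx + rng.gauss(0, noise), cy + rng.gauss(0, noise))

def train_centroids(samples):
    """Average the calibration readings per label (the system learns the user)."""
    sums = {}
    for label, (x, y) in samples:
        sx, sy, n = sums.get(label, (0.0, 0.0, 0))
        sums[label] = (sx + x, sy + y, n + 1)
    return {label: (sx / n, sy / n) for label, (sx, sy, n) in sums.items()}

def decode(reading, centroids):
    """Interpret a reading as the label whose centroid is closest."""
    return min(centroids, key=lambda label: math.dist(reading, centroids[label]))

def to_command(label):
    """Turn a decoded label into a device command (a cursor step along x)."""
    return {"left": -1, "right": 1}[label]

def run_session(n_trials=200, seed=0):
    """Calibrate, then run measure -> decode -> command and report accuracy."""
    rng = random.Random(seed)
    calibration = [(lab, measure(lab, rng))
                   for lab in ("left", "right") for _ in range(50)]
    centroids = train_centroids(calibration)
    cursor, correct = 0, 0
    for _ in range(n_trials):
        intent = rng.choice(["left", "right"])
        label = decode(measure(intent, rng), centroids)
        cursor += to_command(label)  # the user would see this move as feedback
        correct += (label == intent)
    return correct / n_trials
```

Calling `run_session()` returns the fraction of trials decoded correctly. In a real system the feedback step matters far more than it does here, because a human user changes their strategy in response to what the cursor does, while this simulated user does not.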

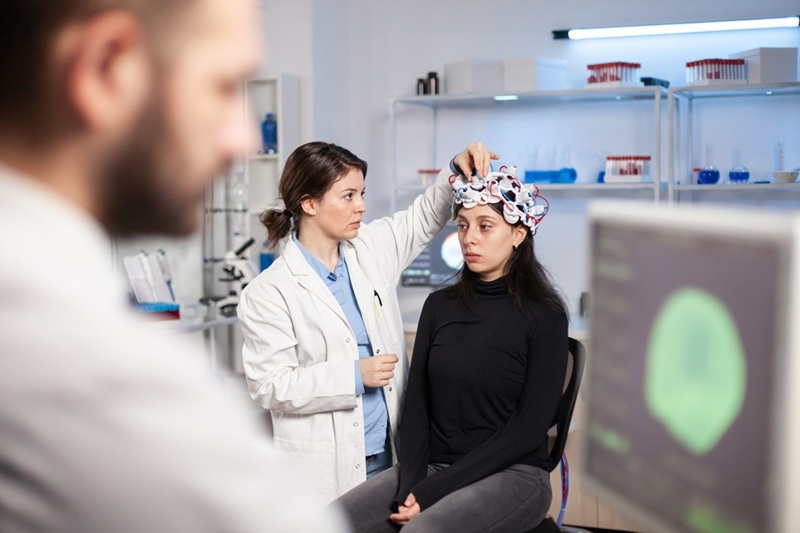

Neural interface technology usually falls into two buckets: non-invasive and invasive. Non-invasive systems measure brain activity from outside the skull. They’re safer and easier to use, but signals are weaker and less precise.

Invasive systems involve sensors placed on or in the brain. These can capture richer signals, but they require surgery and long-term medical monitoring. There’s also a middle zone, like sensors placed on the surface of the brain, which can offer better signal quality than scalp sensors while being less penetrating than deep implants. Each approach comes with tradeoffs in accuracy, risk, and practicality.

BCIs don’t work without brain mapping research. Mapping is how scientists connect “this pattern of activity” with “this action or intention.” It can mean mapping movement areas, speech-related regions, or sensory processing zones.

Mapping happens through:

Neural mapping isn’t a one-and-done thing. The brain adapts. The body changes. Even electrode positions or contact quality can shift. So mapping is often a continuous process, not a single snapshot.

The field isn’t just neuroscience. It’s also human-machine interaction science. A BCI user isn’t passively being decoded. They’re actively learning how to produce controllable signals, often by imagining movement or focusing attention in specific ways.

Good BCI design makes the loop easier:

If the interface is clunky, the user has to “work” too hard to control it. That fatigue limits real-world usefulness. If the interface is smooth, learning accelerates.

The most meaningful progress right now is in BCI medical applications, especially for people with paralysis or severe motor impairment. The goal is often communication and control: typing, cursor movement, device control, or assistive robotics.

In medical contexts, BCIs can support:

These applications are high-stakes, so reliability and safety matter more than flashy demos. A system that works 90 percent of the time in a lab but fails unpredictably at home is not good enough.

A lot of neuroscience innovation in 2026 isn’t a single breakthrough. It’s the stacking of improvements:

In plain terms, the field is getting better at translating “noisy biology” into “usable control.” That’s hard. But the trajectory is moving in the right direction.

Neural interface technology comes down to a tough trade: precision versus risk. Non-invasive systems are more accessible and safer. They’re also limited by physics, because the skull filters and blurs electrical signals.
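To make "blurs" concrete, here is a toy one-dimensional sketch. It is not a physical head model: the smoothing kernel, the grid of 21 positions, and the two source locations are all assumptions picked for illustration. It only shows how spatial smoothing can merge two nearby sources into one weaker bump.

```python
def blur(signal, kernel):
    """Convolve a 1D list with a symmetric kernel; values past the edges count as zero."""
    half = len(kernel) // 2
    out = []
    for i in range(len(signal)):
        total = 0.0
        for k, weight in enumerate(kernel):
            j = i + k - half
            if 0 <= j < len(signal):
                total += weight * signal[j]
        out.append(total)
    return out

def count_peaks(signal):
    """Count strict local maxima, ignoring the two end points."""
    return sum(
        1 for i in range(1, len(signal) - 1)
        if signal[i - 1] < signal[i] > signal[i + 1]
    )

# Two distinct hypothetical sources close together on the "cortex".
cortex = [0.0] * 21
cortex[9] = 1.0
cortex[11] = 1.0

# Assumed smoothing kernel (binomial weights that sum to 1).
kernel = [1 / 16, 4 / 16, 6 / 16, 4 / 16, 1 / 16]
scalp = blur(cortex, kernel)

print(count_peaks(cortex), max(cortex))  # two separate peaks at full amplitude
print(count_peaks(scalp), max(scalp))    # one merged peak at reduced amplitude
```

After smoothing, the two sources are no longer separable and the peak amplitude drops, which is the intuition behind "weaker and less precise" for scalp measurements.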

Invasive systems can offer higher resolution signals, which can improve control and speed. But surgery, long-term device stability, infection risk, and medical follow-up are serious considerations.

That’s why the most impactful use cases today lean medical. In that setting, higher risk may be justified if it significantly improves quality of life.

Drift is one of the most stubborn problems in brain mapping research. A decoder trained today may work less well next week because:

Researchers tackle drift with adaptive models that update over time, and with training protocols that help users maintain stable signal patterns. The aim is long-term usability, not short-term performance.
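As a rough illustration of why adaptation helps, the sketch below compares a fixed decoder with one that keeps re-estimating its baseline. Everything in it is an assumption for demonstration: the signal is simulated, the drift is a simple linear ramp, and the update rule is an exponential moving average, which is one simple adaptive technique among many and not necessarily what any given research group uses. It also assumes the two intents occur about equally often, so the running mean of the feature tracks the baseline.

```python
import random

def simulate(n_trials=400, drift_per_trial=0.01, alpha=0.05, seed=1):
    """Return (fixed_accuracy, adaptive_accuracy) on a slowly drifting signal."""
    rng = random.Random(seed)
    baseline_estimate = 0.0  # both decoders start from the same calibration
    fixed_correct = 0
    adaptive_correct = 0
    for t in range(n_trials):
        intent = rng.choice([-1, 1])       # hypothetical two-class intent
        offset = drift_per_trial * t       # baseline creeps upward over time
        feature = intent + offset + rng.gauss(0, 0.3)

        # Fixed decoder: threshold stays where calibration put it.
        fixed_guess = 1 if feature > 0.0 else -1
        # Adaptive decoder: threshold follows the estimated baseline.
        adaptive_guess = 1 if feature > baseline_estimate else -1

        fixed_correct += (fixed_guess == intent)
        adaptive_correct += (adaptive_guess == intent)

        # Unsupervised update: exponential moving average of the raw feature.
        baseline_estimate += alpha * (feature - baseline_estimate)
    return fixed_correct / n_trials, adaptive_correct / n_trials

fixed_acc, adaptive_acc = simulate()
print(f"fixed: {fixed_acc:.2f}, adaptive: {adaptive_acc:.2f}")
```

In this toy setup the fixed decoder degrades as the baseline moves away from its calibration point, while the adaptive one keeps up. Real drift is messier than a linear ramp, so the numbers here say nothing about real-world performance.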

BCI medical applications are where reality shows up. Beyond “it works,” a medical BCI must be:

Medical BCIs aren’t only science problems. They’re healthcare system problems too. Training, access, support, and follow-up care determine whether the technology reaches the people who need it.

Human-machine interaction science connects directly to ethics, because BCIs deal with signals that feel personal. Even when the system isn’t reading thoughts, it’s still collecting neural data. That raises questions about:

A helpful framing is this: the more intimate the data source, the stronger the guardrails need to be. That means clear policies, strong security, and a simple rule: the user should stay in control.

Neuroscience innovation in 2026 deserves a reality check. Hype tends to inflate expectations, especially around “telepathy” style marketing.

BCIs are not mind reading in the everyday sense. Most decoders work by linking specific neural patterns to specific trained tasks. They’re powerful, but not magical. If a headline claims “BCI reads thoughts,” it’s usually compressing a complex, controlled experiment into a dramatic sentence.

A healthier expectation is: BCIs are improving tools for communication and control, especially in medical contexts. Consumer versions may expand over time, but safety and privacy will matter even more there.

A BCI is:

A BCI isn’t:

When people understand that difference, the field becomes more exciting, not less. It’s real engineering and real neuroscience solving hard problems.

Most BCIs do not read thoughts in a general way. They decode trained signal patterns tied to specific tasks, like cursor movement or imagined speech.

Non-invasive BCIs are useful, especially for research, training, and some assistive applications, but they usually offer less precision than invasive systems because signals are measured through the skull.

The strongest progress is in communication and device control for people with paralysis, plus rehabilitation tools that connect brain intent signals with movement training.

This content was created by AI